Build vs. Buy vs. Platform: What Most Enterprise Evaluations Get Wrong

Cost-first evaluations consistently favor options that are easy to start but difficult to sustain. The tradeoffs don’t become visible until later, when the system is already in use and the organization is committed. By then, changing direction is far more expensive than making a better decision upfront.

By Heather Shoemaker, CEO

Table of Contents

The Cost Conversation Happens Too Early

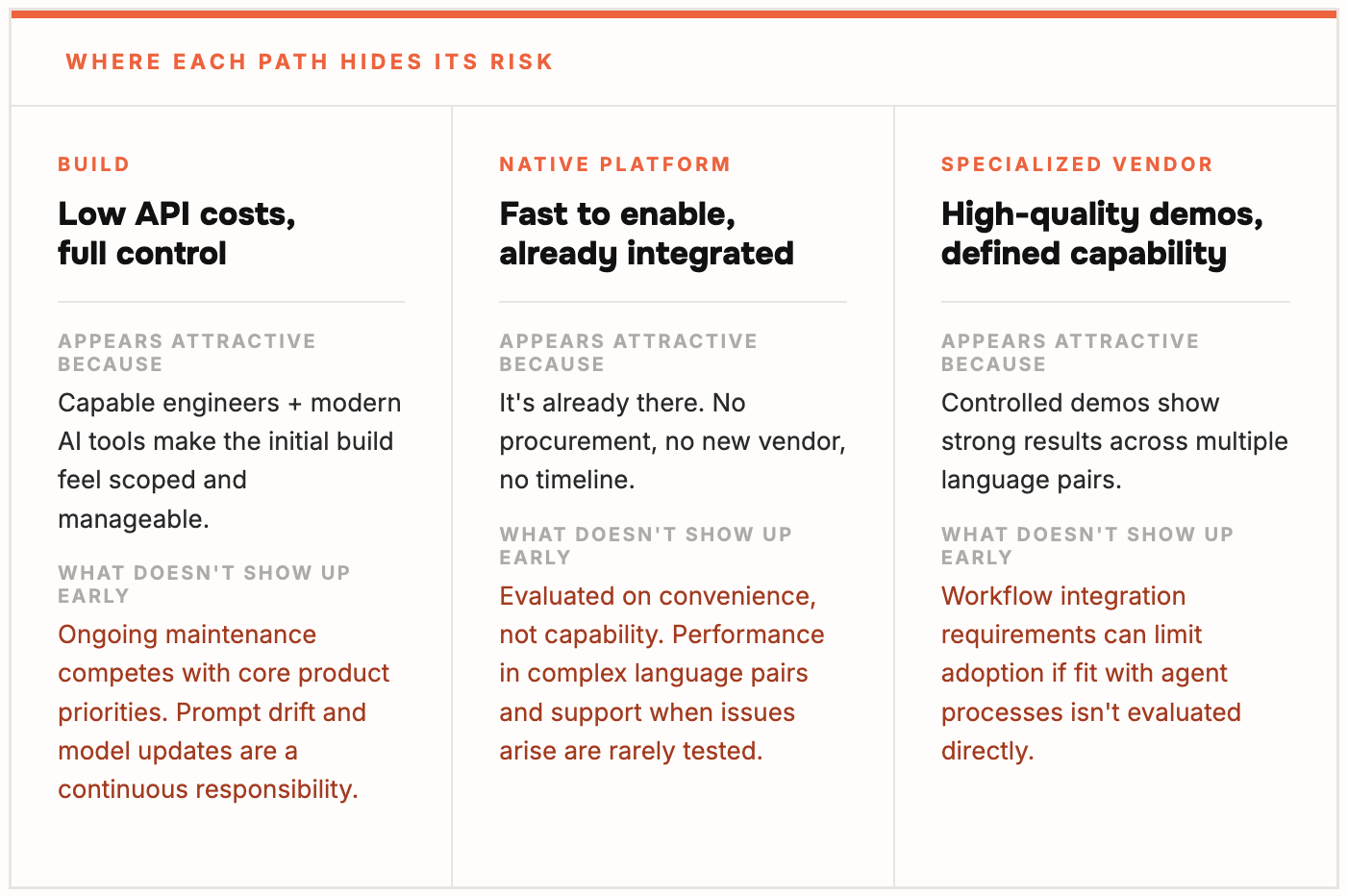

All Three Paths “Work”… That’s the Problem

What Gets Missed in Most Evaluations

Why Most Teams Overestimate Their Options

A Different Way to Approach the Decision

Where the Evaluation Framework Changes the Conversation

Getting to a Decision That Holds Up
