Why “Just Use an LLM” Breaks Down in Customer Support

Every enterprise exploring AI for customer support eventually arrives at the same fork in the road. One path leads toward building something internally on a general-purpose LLM such as Gemini or ChatGPT. The other relies on whatever translation capability is already bundled into the CRM or CCaaS platform. Engineering teams assume the problem is mostly API calls and prompts. Platform buyers assume the built-in feature will be “good enough.”

By Language IO

Table of Contents

The Early Confidence of DIY AI

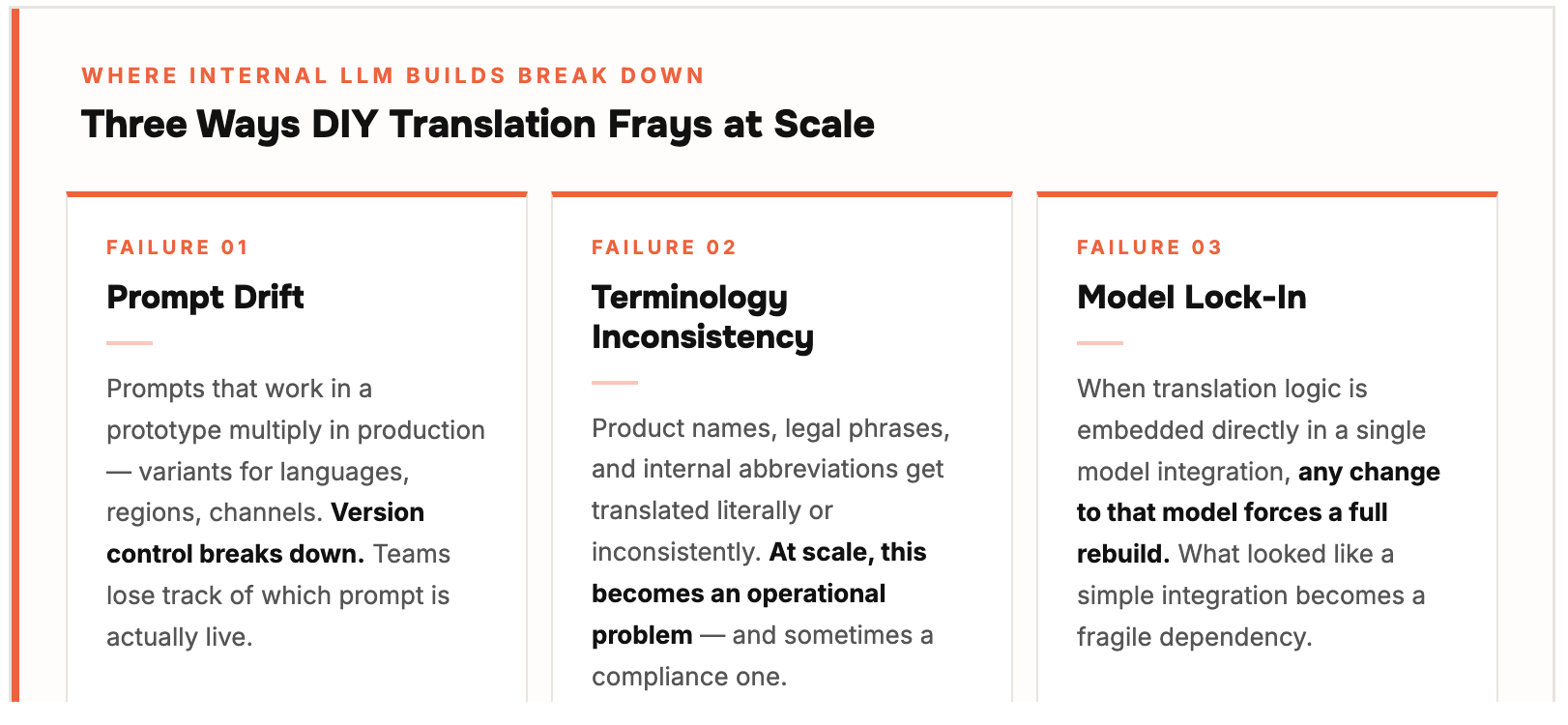

Where Internal Builds Start to Fray

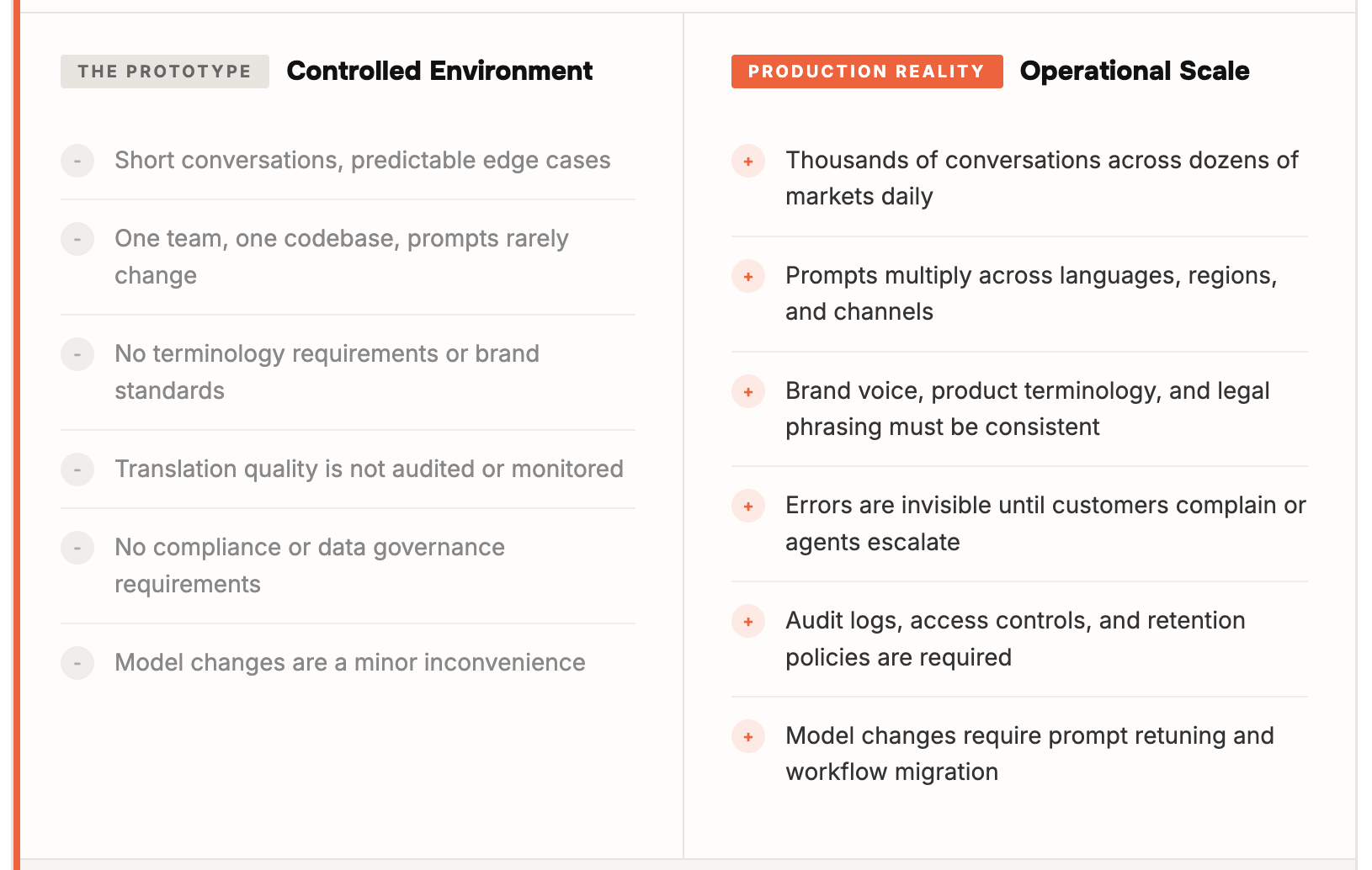

The Scale Problem That Demos Hide

The Illusion of Native Translation

The Missing Layer Most Teams Discover Too Late

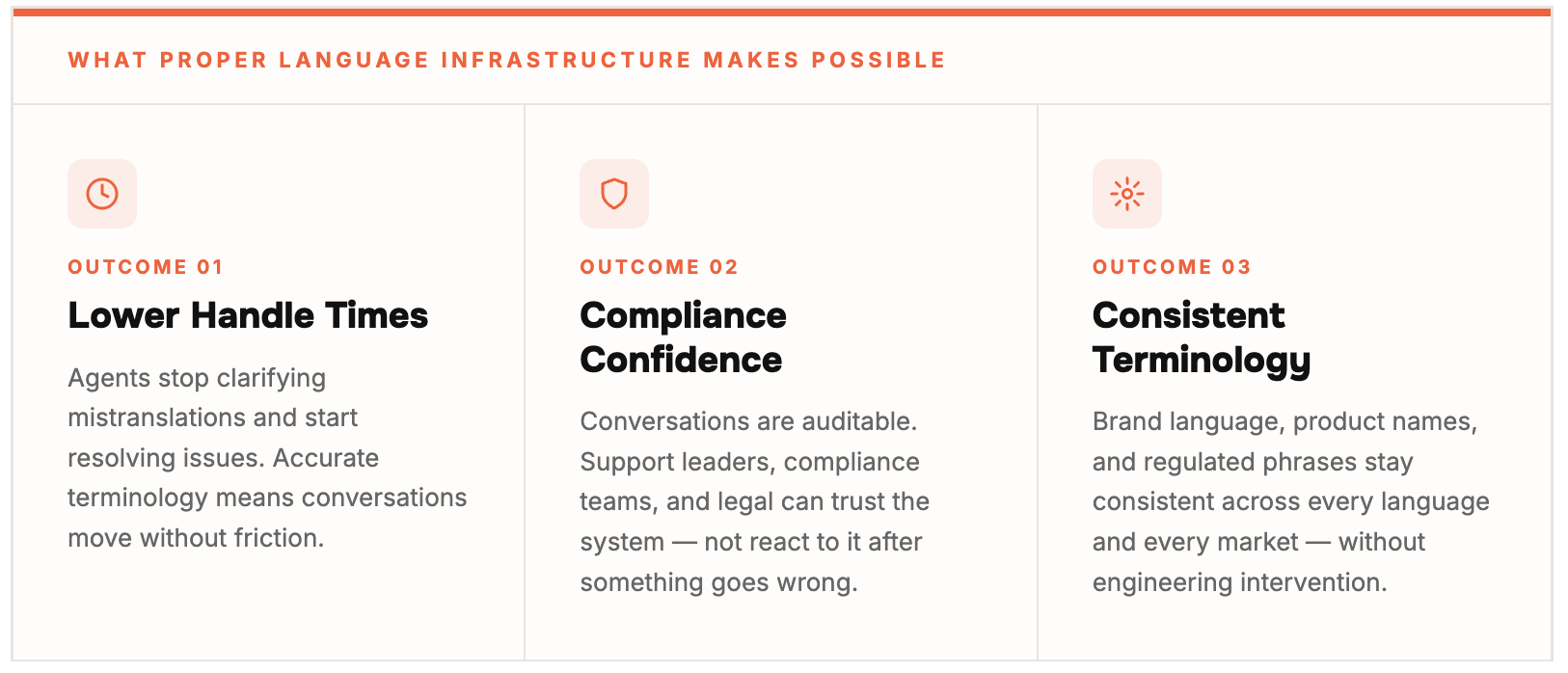

Where the Real Value Shows Up

The Quiet Difference Between Tools and Segments
