Beyond the Hype: Busting the Biggest Myths About AI in CX

By Language IO

If you lead a customer experience team today, you’ve probably heard every possible take on generative AI, from “it’s the future of CX” to “it’s too risky to touch.” Somewhere between the hype and the hesitation lies the truth: AI isn’t coming to replace you or your agents. It’s coming to help you serve customers better, faster, and more personally than ever before.

But fear is a powerful thing. Even the most forward-thinking CX leaders are hesitating because they’re worried about brand safety, accuracy, compliance, or simply not knowing where to begin. This hesitation is understandable, but it’s also holding organizations back from the very tools designed to eliminate friction.

The irony? CX leaders, who have built their careers removing friction for customers, are now creating it for their own teams by avoiding AI.

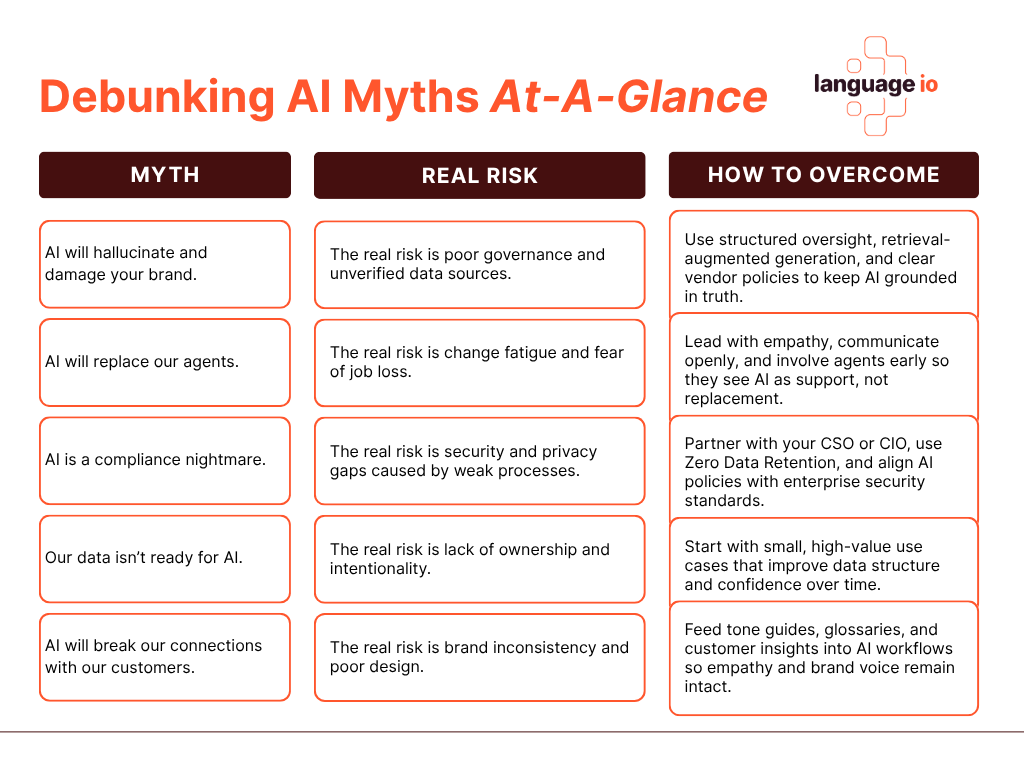

Let’s separate the myths from the real risks and talk about how to adopt generative AI responsibly and confidently.

The Myths

Myth #1: “AI will hallucinate and damage our brand.”

We have all heard the horror stories: chatbots spinning out nonsense or offering tone-deaf replies. Those examples resonate because they tap into a real fear of losing control of your customer voice. In reality, most of these issues come from generic, ungoverned AI systems trained on open internet data.

In a well-designed environment, generative AI is far less likely to go off script. When large language models are paired with retrieval-augmented generation, contextual filters, and verified company data, they stay grounded in facts you control. The difference comes down to structure and accountability.

Think of it like onboarding a new agent. You would not hand someone a headset on day one and hope for the best. You would train them, check their work, and give them the tools to represent your brand with confidence. The same principle applies to AI. With the right context and oversight, it becomes one of your most consistent communicators. It is accurate, dependable, and aligned with your tone.

To strengthen accuracy, start with the data. Feed your AI systems clean, up-to-date knowledge bases and glossaries. Tag your most common customer scenarios so the model can retrieve relevant information quickly. In your prompts, set clear boundaries by explaining what the system can use and what it must avoid. Add phrases such as “only reference verified internal data” or “if uncertain, defer to human review.” These small steps build trust and prevent hallucinations before they happen.
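For teams that want to see what this looks like in practice, here is a minimal sketch of the idea: tagged knowledge-base articles are looked up by scenario, and the prompt opens with the boundary phrases mentioned above. The `Article` class, the `retrieve` and `build_prompt` helpers, and the tag names are all hypothetical and chosen for illustration. A production system would likely use a proper retrieval layer rather than a simple tag match.

```python
from dataclasses import dataclass


@dataclass
class Article:
    """A hypothetical knowledge-base entry tagged with customer scenarios."""
    title: str
    body: str
    tags: frozenset


# Boundary phrases taken from the guidance above.
GUARDRAILS = (
    "Only reference verified internal data provided below.",
    "If uncertain, defer to human review.",
)


def retrieve(articles, scenario_tag, limit=3):
    """Return up to `limit` articles tagged with the given scenario."""
    return [a for a in articles if scenario_tag in a.tags][:limit]


def build_prompt(question, articles, scenario_tag):
    """Assemble a prompt: rules first, then verified context, then the question."""
    context = retrieve(articles, scenario_tag)
    lines = ["Rules:"]
    lines += [f"- {rule}" for rule in GUARDRAILS]
    lines.append("Verified context:")
    if context:
        lines += [f"[{a.title}] {a.body}" for a in context]
    else:
        # Nothing verified to ground the answer in, so say so explicitly.
        lines.append("(none found; defer to human review)")
    lines.append(f"Customer question: {question}")
    return "\n".join(lines)
```

The point of the sketch is the ordering: the model sees its limits and its approved sources before it sees the customer's question, and an empty lookup is flagged rather than silently ignored.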

Myth #2: “AI will replace our agents.”

Few ideas cause more anxiety in customer experience teams than the thought of AI replacing human agents. It is an understandable fear. Every new wave of automation has sparked concern about jobs and the loss of human touch. The reality, though, is that generative AI is not designed to replace people. It is meant to remove the friction that keeps them from doing their best work.

AI is at its most powerful when it takes on the repetitive, time-consuming tasks that drain energy and focus. It can summarize complex case histories, surface relevant knowledge articles, or instantly translate customer messages into any language. These tools help agents spend less time searching for answers and more time building real connections with customers.

For instance, you want your agents to be problem solvers with empathy, not writing experts. AI can help by cleaning up slang, jargon, and poor grammar before messages are sent, making them clearer and more professional without losing the human tone. This kind of translation optimization smooths the customer experience while giving agents more time to focus on what matters most: solving the issue accurately and compassionately.

The impact goes beyond efficiency. When the most tedious work disappears, agents have more space to listen, empathize, and solve problems creatively. That shift tends to increase both customer satisfaction and employee morale.

Some leaders worry that adding AI will make customer experiences feel robotic. The opposite is usually true. By automating the routine, teams regain the emotional bandwidth that makes service personal. The result is faster responses, higher consistency, and a customer experience that feels more human, not less.

To make this transition successful, transparency is essential. Involve agents early in the process, invite feedback, and explain the “why” behind new tools. When teams understand that AI is here to support them rather than replace them, adoption becomes far smoother and the customer experience becomes stronger.

Myth #3: “AI is a compliance nightmare.”

This is one of the most common fears among CX leaders, and it is not without reason. Customer data is sensitive, and the idea of feeding it into an AI system can feel risky. The truth is that those risks are real only when the wrong systems are used. The real danger is not AI itself but how it is configured, governed, and secured.

The good news is that AI does not have to compromise compliance. In fact, when implemented correctly, it can strengthen it. Secure architectures, zero-data-retention policies, and role-based access controls give organizations more visibility and consistency than many legacy tools ever did. Zero Data Retention means customer data is never stored, never reused, and never available for training. Each interaction exists only long enough to complete the task and then disappears completely. That design eliminates one of the largest sources of risk in enterprise AI.

Transparency also matters. CX leaders should ask how a model is trained, what data is stored, and who can access it. Certifications such as ISO/IEC 42001, which establish clear frameworks for AI management and accountability, can provide additional peace of mind. The goal is not to eliminate risk entirely but to make it measurable, traceable, and well-managed.

AI can even help reduce compliance burden by creating automatic audit trails, flagging potential data exposure, and standardizing how sensitive information is handled across regions. In other words, the same technology that once caused anxiety can become one of your strongest compliance allies.

Finally, make security a partnership. Work closely with your Chief Security Officer or CIO to understand your company’s data policies, and bring them into the process early. Their guidance can help you align AI use cases with existing governance frameworks and ensure that every decision reflects your organization’s security posture. When security and CX leaders move in lockstep, AI becomes a force that strengthens trust instead of testing it.

Myth #4: “Our data isn’t ready for AI.”

This is one of the most common reasons CX leaders delay AI adoption. The logic sounds responsible: if our data is messy, incomplete, or inconsistent, we should wait until it is perfect. But that day rarely comes. Waiting for perfect data is like waiting for a customer queue to disappear—it never really happens.

The truth is that no organization starts with flawless data. The most effective AI programs begin with targeted use cases that help improve data quality over time. Tasks like translation, summarization, or auto-tagging support tickets all make existing data more structured and searchable. Each step feeds the next, creating a gradual improvement loop that strengthens accuracy and performance.

Think of it as building muscle rather than flipping a switch. Every small, well-governed AI project adds structure, context, and metadata to your systems. That work compounds. Before long, what felt like an obstacle becomes one of your strongest assets.

Good data also depends on clear ownership. Identify who manages your customer knowledge bases, product glossaries, and response templates. When these are current and consistent, your AI has the right foundation to operate with precision.

A practical starting point is to focus on data that touches the customer directly. Audit your top fifty support articles, most-used macros, or highest-volume case types. Clean and tag that content first, and feed it into your AI workflows. The early improvements in speed and accuracy will build internal confidence and help make the case for expanding to broader datasets.
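As a rough illustration of that audit, the sketch below ranks articles by how often they are viewed, keeps the top fifty, and flags any that have not been reviewed recently. The field names (`views`, `last_reviewed`) and the one-year staleness threshold are assumptions made for the example, not a standard. Substitute whatever your help desk actually exports.

```python
from datetime import date


def pick_audit_candidates(articles, top_n=50, stale_after_days=365, today=None):
    """Return the `top_n` most-viewed articles, each marked stale or not.

    `articles` is a list of dicts with hypothetical keys:
    "title", "views" (int), and "last_reviewed" (datetime.date).
    """
    today = today or date.today()
    # Highest-traffic content first, since it touches the most customers.
    ranked = sorted(articles, key=lambda a: a["views"], reverse=True)[:top_n]
    return [
        {**a, "stale": (today - a["last_reviewed"]).days > stale_after_days}
        for a in ranked
    ]
```

Articles that come back marked stale are the natural first candidates to clean and tag before they are fed into any AI workflow.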

Your data does not have to be perfect to begin. It simply has to be intentional, secure, and guided by clear business goals. Starting small, learning quickly, and improving continuously will prepare your organization far better than waiting on a mythical “clean data day.”

Myth #5: “AI will break our connection with our customers.”

This fear runs deeper than technology. At its core, it is about losing the very thing that defines customer experience: authentic connection. CX leaders have spent years building teams, processes, and cultures around empathy. The idea of introducing automation into that relationship can feel like crossing a line.

But connection is not lost through technology. It is lost through poor design and lack of intention. When implemented thoughtfully, AI can actually deepen the customer relationship. It can anticipate needs, translate in real time, and surface knowledge instantly so agents can respond with accuracy and care.

The key is to use AI as an amplifier, not a substitute. When technology handles routine tasks, agents have more space to focus on what humans do best – listening, problem solving, and showing empathy. The result is not distance but closeness, because customers spend less time waiting and more time being understood.

Human connection does not disappear when AI enters the conversation. It disappears when speed and scale are prioritized over sincerity. CX leaders can protect against that by staying involved in how AI is trained, tested, and tuned. Measure not just efficiency, but how interactions feel.

In the end, AI does not break connection. It mirrors the values of the people who design and guide it. If empathy is at the center of your customer experience strategy, it will be at the center of your AI strategy too.

The Real Risks and How To Overcome Them

The myths around AI in CX are powerful, but so are the opportunities. Once you separate fear from fact, what remains are real, manageable risks that every leader can plan for. The difference between risk and reward comes down to intention, governance, and communication.

Real Risk #1: Poor Governance

AI without structure or oversight quickly leads to confusion, inconsistency, and risk. It is not enough to have AI features; you need clear policies for how they are used and by whom. Without governance, good intentions can still create poor outcomes.

How to overcome it:

Start by defining what “responsible AI” means for your organization. Create an internal AI governance group that includes leaders from CX, IT, security, and legal. Document approved use cases, review prompts and workflows regularly, and set clear escalation paths when something goes wrong. Treat AI decisions with the same rigor you apply to financial or compliance decisions.

Governance also means choosing your partners wisely. Every platform now claims to have an AI angle, but not all of them have the depth or domain expertise to do the work responsibly. Look for vendors who are experts in the problem you are trying to solve, not just experts in AI.

Ask direct questions:

- How is data handled and protected?

- Are responses grounded in verified, domain-specific content or generic web data?

- How do you measure accuracy and bias?

- What happens if a model underperforms?

For example, in customer translation, it is not enough for a system to “use AI.” It must also understand context, tone, and brand nuance. Tools built specifically for professional translation workflows are designed to protect accuracy and preserve the customer relationship you have worked so hard to build.

The goal of governance is not to slow innovation but to guide it. With the right structure and trusted partners, AI becomes a safe, scalable part of your customer experience strategy rather than a source of uncertainty.

Real Risk #2: Lack of Transparency

When people do not understand how AI works, they stop trusting it. That is true for both employees and customers. Agents may worry they are being replaced or monitored. Customers may feel uneasy if they cannot tell who—or what—is responding to them. The result is the same: hesitation and mistrust.

How to overcome it:

Make transparency a foundational value, not an afterthought. Explain clearly how your AI tools operate, what data they use, and when humans are part of the process. Provide agents with clear visibility into what the system is doing and why, so they understand how it supports them rather than replaces them.

When selecting vendors, look for partners who are open about their architecture, data handling, and decision logic. If a provider cannot explain how a model was trained, what data sources it uses, or how it handles errors, that is a red flag.

Ask these questions early:

- Can you describe your data retention policy in detail?

- How are models updated or retrained, and how often?

- What safeguards exist to prevent bias or drift?

- What documentation do you provide to validate performance?

Also, make sure the company is transparent about its expertise. Many providers now market “AI-powered” solutions, but few can show depth in the specific CX challenges you face. For example, in translation, transparency means more than simply declaring “we use AI.” It means being able to show how translation quality is measured, how accuracy is improved through optimization, and how customer tone is preserved across languages.

Transparency builds confidence. When teams and customers understand how and why AI makes decisions, they are far more likely to trust its results. The most effective CX organizations are those where AI is not a black box but a well-lit room everyone can see into.

Real Risk #3: Change Fatigue

Even well-intentioned AI rollouts can fail if the people who use them feel overwhelmed or threatened. CX teams already handle emotionally demanding work, and the arrival of new technology can create anxiety instead of excitement—especially when agents worry that automation might replace them.

How to overcome it:

Lead with transparency and empathy. Acknowledge that job-security fears are real, and talk openly about how AI is meant to help, not replace. Explain which tasks automation will handle and which will always require a human touch. Reinforce the idea that AI is taking over the repetitive, low-value work so agents can focus on what customers truly need: understanding, creativity, and problem-solving.

Training is also key. Involve agents early, invite them to test new tools, and ask for their feedback. When teams see their ideas reflected in the final workflows, adoption happens naturally.

Choose partners who understand the human side of AI adoption. Look for vendors who can help you design tools that genuinely support agents, not just dashboards that track them. Ask:

- How does this tool improve the agent experience?

- What controls allow teams to review and edit AI output before sending?

- What onboarding and support resources are available for our staff?

A thoughtful rollout builds confidence. When agents feel included, informed, and supported, fear turns into curiosity. That shift is what transforms technology from a source of stress into a source of strength.

Real Risk #4: Brand Inconsistency

Every customer interaction shapes how people feel about your brand. That means even small inconsistencies – an awkward tone, a mistranslated phrase, or a message that sounds slightly “off” – can chip away at trust. When AI tools produce content that lacks emotional awareness or brand alignment, the experience can feel disconnected and impersonal.

How to overcome it:

Brand consistency begins with clarity. Make sure your organization has a well-documented tone guide, brand glossary, and library of approved messaging. These resources should be accessible not just to your agents but to your AI systems as well.

Feed those materials directly into your workflows so AI has the same context your people do. For example, a translation model should recognize your product names, understand your brand voice, and adapt tone appropriately for different markets. That context helps protect your brand identity while keeping communication natural and authentic.

Ask vendors:

- How do you ensure tone and intent stay consistent across translations?

- Can your system recognize branded terms and avoid mistranslating them?

- How do humans review or train the model to learn our specific brand voice?
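One simple way to spot-check the branded-terms question yourself is sketched below. It assumes your brand glossary lists terms that should appear unchanged in every language, which will not be true for every brand or market. The function name and the sample product names are hypothetical.

```python
def missing_brand_terms(source, translation, glossary):
    """Return glossary terms found in the source but absent from the translation.

    Matching is case-insensitive. This assumes branded terms are meant to
    stay untranslated; terms with approved localized names need a mapping.
    """
    src = source.casefold()
    out = translation.casefold()
    return [
        term for term in glossary
        if term.casefold() in src and term.casefold() not in out
    ]


# Example with made-up product names: "Acme Hub" was translated away.
flagged = missing_brand_terms(
    "Reset your Acme Pay password in the Acme Hub.",
    "Restablezca su contraseña de Acme Pay en el Centro Acme.",
    ["Acme Pay", "Acme Hub"],
)
```

A check like this does not judge tone or intent, but it gives reviewers a short list of messages to look at first.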

The best AI tools act like your most experienced agents: they understand not only what to say, but how to say it. When designed this way, AI doesn’t dilute your brand. It reinforces it, extending your company’s personality into every interaction with precision and care.

Real Risk #5: Security and Privacy Gaps

Security is the foundation of customer trust. Even the most advanced AI strategy can collapse if data is mishandled or exposed. For many CX leaders, this is the fear that keeps them up at night—what happens if sensitive customer information is accidentally stored, leaked, or used for model training?

How to overcome it:

Treat AI security as an extension of your existing data governance, not a separate issue. Partner early with your Chief Security Officer or CIO to ensure alignment on architecture, compliance, and vendor selection. Review where your customer data flows, who has access to it, and what happens once an interaction is complete.

Prioritize solutions with Zero Data Retention, where customer data is never stored, reused, or available for training. This principle eliminates one of the largest sources of risk in AI adoption. Once a task is complete, the data disappears, leaving no lingering trail that could compromise privacy.

Ask vendors to be explicit about their security practices:

- How is data encrypted in transit and at rest?

- What third-party audits or certifications validate your compliance?

- How do you prevent inadvertent data capture during AI processing?

- Where, physically and legally, is the data processed?

True AI readiness means protecting trust at every layer. The CX function often sits closest to sensitive customer information, which means it carries both the privilege and responsibility of stewardship. By making security a shared discipline across teams—CX, IT, and legal—you create a culture where innovation and safety move together.

When customers know their information is handled with care, they are far more open to engaging through AI-assisted channels. The safest environments are not the ones that avoid technology, but the ones that design for privacy from the start.

From Fear to Framework

Final Thoughts

Every major shift in customer experience has started with fear. When IVRs first appeared, leaders worried they would make service feel cold and robotic (and they can, if poorly implemented). When live chat launched, some thought customers would never embrace typing over talking. Even email support was once seen as risky because it was too slow, too impersonal, and too prone to misinterpretation.

Yet over time, each of these technologies became part of the CX fabric. They didn’t replace human connection; they redefined it. They gave customers choice and gave agents more time to focus on complex, high-value interactions. What once looked like disruption became the new standard for care.

Generative AI sits at that same turning point today. It inspires both excitement and unease, and rightly so. It touches every part of the customer relationship, including language, tone, data, and trust. But just like the tools that came before it, AI’s long-term impact will be shaped not by the technology itself, but by the intentions of the people guiding it.

CX leaders have always been the architects of empathy at scale. They know that tools are only as human as the hands that use them. The same is true for AI. When deployed thoughtfully with governance, transparency, and security at its core, AI becomes an extension of your values, not a threat to them.

The next era of customer experience will belong to the organizations that balance innovation with integrity. They will experiment boldly, but never carelessly. They will protect customer trust as fiercely as they pursue efficiency. And they will see AI for what it truly is: the next natural step in a long history of technologies that made connection possible in new and better ways.

Fear always comes first. Then practice. Then progress. And soon enough, what feels unfamiliar today will simply be how great customer experience gets done.
