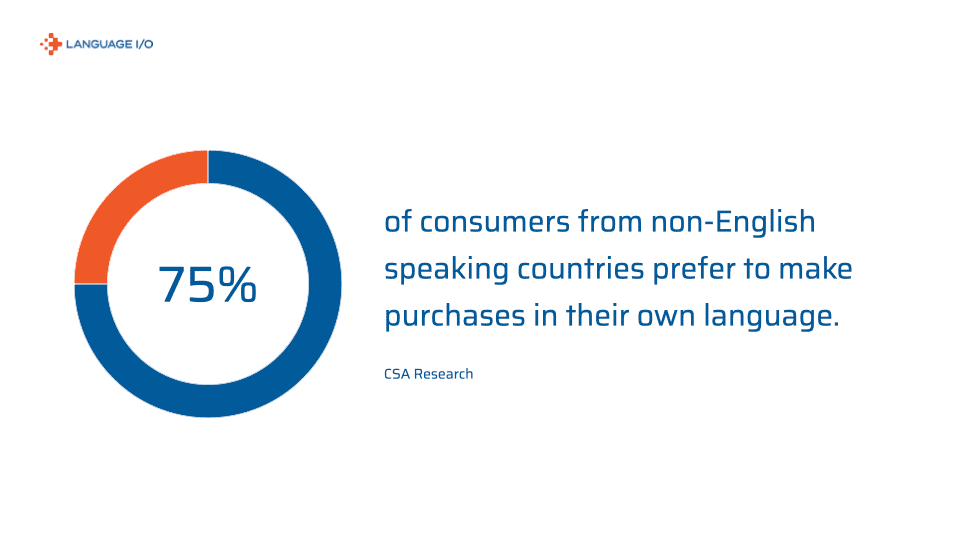

When personalization, speed, and language inclusivity define customer loyalty, it’s no longer acceptable for enterprise brands to default to English-only support.

Consumers don’t just prefer service in their native language—they expect it.

Yet many companies still rely on generic bots and Google Translate, hoping that will be enough.

It’s time to rethink the multilingual customer experience not as a localization project, but as a core pillar of enterprise CX strategy.

The true test of an intelligent chatbot isn’t how well it speaks English—it’s how well it understands humans in any language.

TL;DR

- Multilingual chatbots are no longer a luxury; they’re an enterprise necessity.

- Future-ready CX encompasses multilingual voice and text capabilities.

- Global customers expect support in their native language.

- Generic solutions like Google Translate aren’t enough.

- Innovative brands are adopting translation layers that integrate with existing bots.

Why Multilingual Isn’t Optional—It’s Foundational

AI that doesn’t speak your customer’s language isn’t intelligent—it’s incomplete.

Today’s enterprise isn’t judged just on how fast it responds, but on how well it understands. That understanding has to transcend languages, cultures, typos, slang, and nuance. It must scale globally while remaining contextually relevant locally.

That’s not a nice-to-have. That’s table stakes.

At Language IO, we don’t view multilingual support as an add-on to your chatbot—we see it as the core of enterprise-ready conversational AI.

Why? Because:

- You shouldn’t have to train ten bots to go global.

- You shouldn’t sacrifice tone, brand, or context just to go fast.

- You shouldn’t treat translation as a fix—it should be built in from the start.

- You shouldn’t compromise the security of your customers’ information.

The next generation of enterprise AI will be measured by its ability to operate seamlessly across languages, channels, and cultures.

Multilingual isn’t just a checkbox. It’s a strategy. It’s infrastructure.

It’s how you make AI actually conversational.

3 Business Benefits of Multilingual Live Chat

A business that uses a chatbot in different languages has the edge over its competitors. Here are three business benefits of multilingual live chat:

1. Global Reach Without Global Payroll

Scaling customer support internationally doesn’t need to involve ballooning headcount. With a multilingual chatbot, enterprises can automate Tier-1 queries across markets and time zones without hiring native speakers for every region. The result? Faster expansion, hiring for skills rather than language, and consistent CX worldwide.

2. Boosted Customer Loyalty and Retention

Providing multilingual support signals a commitment to your global customers. It reduces friction, builds trust, and shortens sales cycles—critical metrics in competitive industries like SaaS, fintech, and retail.

3. Cost-Efficiency With Higher Accuracy

Automating multilingual support doesn’t just reduce agent workload—it also improves precision. Language IO, for example, ensures domain-specific glossary enforcement and tone consistency, thereby preventing brand damage caused by poor translations. This saves money not only on labor but on error correction and reputation management.

Unlike single-language bots duplicated across regions, a layered translation approach avoids training waste and dramatically reduces time-to-deploy.

Use Cases for Multilingual Chatbots

Here are three ways multilingual chatbots benefit both companies and their customers.

1. Enterprise Customer Support

For global B2B and B2C enterprises, multilingual chatbots extend high-quality service across every market. Think 24/7 troubleshooting for a software product in German, or assisting users with password resets in Japanese. Even escalations are smoother when initial triage is multilingual.

2. Revenue-Generating Conversational Commerce

A multilingual chatbot doesn’t just support customers—it can sell to them. By combining product recommendations with localized upsells, enterprises can enhance average order value (AOV) and conversion rates in non-English markets. This is especially valuable in high-growth regions like LATAM, EMEA, and APAC.

3. Internal Support and HR

Global HR teams are turning to multilingual bots to streamline employee onboarding, benefits enrollment, and policy FAQs. Employees get answers instantly in their preferred language, while HR saves hours per week on repetitive queries.

It’s not just about customer service—multilingual AI builds operational fluency across every internal function.

How to Make Your Chatbot Multilingual

Many organizations already recognize the need to provide multilingual customer support, but they encounter challenges when attempting to implement it. Some 81% of companies find training even a single chatbot more difficult than expected because of the significant time and resources required to train and deploy it.

Duplicating this effort across multiple languages becomes cost- and time-prohibitive for many organizations, resulting in abandonment rates of 40%. Many teams also discover that tools like Google Translate fall short—supporting your multilingual customer base requires more nuanced, enterprise-grade solutions.

So how can brands provide multilingual chatbot support without running into these same obstacles?

The answer: deploy a solution that layers itself between the chatbot and a machine translation resource. This removes the need to train a new chatbot for each language your customers speak, while still enabling your chatbot to provide support to your global customers.

At Language IO, our solution does just that by seamlessly integrating with your existing chatbot and generating accurate, machine-translated content tailored to your business and data. This significantly reduces the time required to set up a multilingual chatbot while achieving the same goal of providing multilingual customer experiences.

Additionally, with our solution, chatbots in other languages can achieve the same level of accuracy as those in the language in which they were first built.
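To make the layering idea concrete, here is a minimal sketch of a translation layer wrapped around a bot trained in a single language. It is an illustration only, not Language IO's API: `TranslationLayer`, `fake_translate`, and `english_bot` are hypothetical names, and the lookup-table "translator" stands in for whatever machine translation resource you actually use.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical shapes: any MT client and any existing single-language bot
# can be adapted to these two signatures.
Translate = Callable[[str, str, str], str]  # (text, source_lang, target_lang) -> text
BotReply = Callable[[str], str]             # bot-language text in, bot-language text out


@dataclass
class TranslationLayer:
    """Sits between the customer and a bot trained in one language."""
    bot: BotReply
    translate: Translate
    bot_lang: str = "en"

    def handle(self, message: str, customer_lang: str) -> str:
        # No translation needed when the customer already uses the bot's language.
        if customer_lang == self.bot_lang:
            return self.bot(message)
        inbound = self.translate(message, customer_lang, self.bot_lang)
        reply = self.bot(inbound)
        return self.translate(reply, self.bot_lang, customer_lang)


# Stand-ins for demonstration only: a lookup-table "translator" and a one-intent bot.
_PHRASES = {
    ("de", "en", "Wie setze ich mein Passwort zurück?"): "How do I reset my password?",
    ("en", "de", "Click 'Forgot password' on the login page."):
        "Klicken Sie auf der Anmeldeseite auf 'Passwort vergessen'.",
}


def fake_translate(text: str, source: str, target: str) -> str:
    return _PHRASES.get((source, target, text), text)


def english_bot(text: str) -> str:
    if "password" in text.lower():
        return "Click 'Forgot password' on the login page."
    return "Could you tell me more?"


layer = TranslationLayer(bot=english_bot, translate=fake_translate)
print(layer.handle("Wie setze ich mein Passwort zurück?", "de"))
```

The bot itself never changes: adding a language means giving the layer a translation path for it, not training a second bot.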

What Should a Cross-Language Chatbot Include?

We’ve looked at the benefits of multilingual chatbots and the contexts in which they can be used to great advantage. But how do you make a chatbot multilingual, and what should it include?

1. Multilingual NLP Engine

A multilingual AI chatbot needs to understand what users mean, not just translate words.

NLP capabilities should support idioms, misspellings, and regional slang across languages.

The best multilingual bots are built on multilingual LLMs that can interpret intent, even when the customer’s message is full of ambiguity or unconventional phrasing—a frequent reality in real-world support interactions.

2. Custom Glossary and Brand Lexicon

Enterprise-grade bots must speak your brand’s language. Glossaries ensure accurate translations for product names, promotions, and jargon (e.g., translating “free” as “bonus” instead of “no cost” in marketing contexts).

Incorporating brand lexicons also protects your voice, ensuring consistency across markets and preventing tone dilution that can occur in literal translation engines.
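One common way to enforce a glossary is to swap protected terms for placeholder tokens before translation and restore the approved wording afterward. The sketch below assumes the translation engine passes placeholder tokens through unchanged, which you would need to verify for your engine. The glossary entries, `translate_with_glossary`, and the `fake_mt` stand-in are all hypothetical.

```python
import re

# Hypothetical glossary: source term -> approved term per target language.
GLOSSARY = {
    "AcmePay": {"de": "AcmePay"},          # product name, never translated
    "free gift": {"de": "Bonusgeschenk"},  # marketing sense: "bonus", not "no cost"
}


def translate_with_glossary(text, target_lang, translate):
    slots = {}
    protected = text
    # Longest terms first so a short term cannot split a longer one.
    for i, term in enumerate(sorted(GLOSSARY, key=len, reverse=True)):
        approved = GLOSSARY[term].get(target_lang)
        if approved is None:
            continue
        token = f"__TERM{i}__"
        pattern = re.compile(re.escape(term), re.IGNORECASE)
        protected, count = pattern.subn(token, protected)
        if count:
            slots[token] = approved
    # Assumption: the engine leaves the placeholder tokens untouched.
    translated = translate(protected, target_lang)
    for token, approved in slots.items():
        translated = translated.replace(token, approved)
    return translated


def fake_mt(text, target_lang):
    # Stand-in engine that translates literally ("free" -> "kostenlos").
    for src, dst in [
        ("free gift", "kostenloses Geschenk"),
        ("Get a", "Sichern Sie sich ein"),
        ("with", "mit"),
    ]:
        text = text.replace(src, dst)
    return text


source = "Get a free gift with AcmePay"
print(fake_mt(source, "de"))                           # literal rendering
print(translate_with_glossary(source, "de", fake_mt))  # glossary-enforced rendering
```

The first call yields the literal "kostenloses Geschenk", while the glossary-enforced call yields the approved "Bonusgeschenk" and leaves the product name intact.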

3. Smart Dialogue Management

Context retention across languages is key to making a chatbot multilingual. Dialogue managers must maintain thread continuity, even when users switch topics or mix languages mid-query.

Advanced systems should also recognize follow-up intent in different languages. For example, if a user asks about a product in one language and then asks about shipping in another, the bot should retain the earlier context.
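A simple way to picture this is a conversation state stored in a language-neutral form, so that a language switch changes only the reply language and not what the bot remembers. The sketch below is illustrative: `Turn` and `Conversation` are hypothetical names, and it assumes an upstream NLU step has already extracted the intent and entities from each message.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Turn:
    lang: str       # language detected for this message
    intent: str     # language-neutral intent label from the NLU step
    entities: dict  # language-neutral entities, e.g. {"product": "X200 headset"}


@dataclass
class Conversation:
    """Keeps state in a language-neutral form so a language switch does not reset it."""
    entities: dict = field(default_factory=dict)
    history: list = field(default_factory=list)
    reply_lang: Optional[str] = None

    def update(self, turn: Turn) -> None:
        # New entities extend what is already known; earlier ones persist.
        self.entities.update(turn.entities)
        self.history.append(turn)
        # Reply in the language of the latest message.
        self.reply_lang = turn.lang

    def resolve(self, slot: str) -> Optional[str]:
        return self.entities.get(slot)


convo = Conversation()
# Turn 1 (English): "Does the X200 headset support Bluetooth?"
convo.update(Turn(lang="en", intent="product_question",
                  entities={"product": "X200 headset"}))
# Turn 2 (Spanish): "¿Cuánto tarda el envío?" names no product.
convo.update(Turn(lang="es", intent="shipping_question", entities={}))

# The shipping question still resolves to the product from turn 1,
# and the reply language follows the customer's switch to Spanish.
print(convo.resolve("product"), convo.reply_lang)
```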

4. Flexible Integrations

Your bot should connect seamlessly with Salesforce, Oracle, Zendesk, or your custom stack. No enterprise has the time or appetite for isolated systems.

Multilingual capability must be an invisible enhancement to your current workflow, not a rebuild. Look for solutions that integrate translation where your agents already live, not outside of it.

5. Compliance and Data Security

If you operate in regulated industries, your chatbot must meet standards like GDPR, HIPAA, or PCI DSS—even when translating user data.

Multilingual bots should be built with data residency, encryption, and access controls that treat translated content with the same sensitivity as the original message.

Failure to do so can turn translation from a convenience into a compliance risk.
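As a rough illustration of treating translated content with the same sensitivity as the original, the sketch below has every translated copy inherit the original message's classification and residency region. The `Sensitivity` levels, `Message` fields, and `can_read` check are hypothetical simplifications, not a compliance implementation.

```python
from dataclasses import dataclass, replace
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    PERSONAL = 2
    REGULATED = 3  # e.g. health or payment data


@dataclass(frozen=True)
class Message:
    text: str
    lang: str
    sensitivity: Sensitivity
    region: str  # where the data must stay, e.g. "eu"


def translated_copy(original: Message, text: str, lang: str) -> Message:
    # Only text and language change; sensitivity and residency carry over.
    return replace(original, text=text, lang=lang)


def can_read(clearance: Sensitivity, message: Message) -> bool:
    # The same access rule applies to originals and translations alike.
    return clearance.value >= message.sensitivity.value


original = Message("Meine Kartennummer endet auf 4242", "de",
                   Sensitivity.REGULATED, "eu")
english = translated_copy(original, "My card number ends in 4242", "en")

print(english.sensitivity, english.region)
print(can_read(Sensitivity.PERSONAL, english))
```

Because the translation is derived from the original record rather than created fresh, it cannot silently end up with a weaker classification.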

The Future of Multilingual Conversational AI

The next evolution isn’t just chat. It’s voice-first, multilingual, context-rich AI that understands emotion, slang, and nuance—and delivers real-time resolution without losing brand consistency.

As Sundar Pichai, CEO of Google, stated, “AI-generated translation will preserve the original speaker’s voice, tone, and expression.” This reflects the growing expectation that multilingual AI won’t just translate accurately—it will communicate authentically.

Voice interfaces are already dominating smart devices and emerging in enterprise CX.

Global enterprises must prepare for a multimodal world where support can start via voice, transition into chat, and conclude via email—all in the customer’s preferred language. The brands that win will be those who architect their AI ecosystems with localization, speed, and security baked in from day one.

Key Takeaways

Multilingual chatbots aren’t futuristic anymore—they’re a competitive differentiator right now. With the right tech stack (and the right translation layer), businesses can deploy bots that talk to anyone, anywhere, without sacrificing quality.

Language IO enables you to skip the pain of training a new bot for every market. We layer multilingual capability onto your existing systems, making global CX simple, scalable, and accurate. Ready to meet your customers in their language? Start your free trial today.